When it comes to optimising your website, you should always be looking for ways to gain an advantage and improve your rankings. One of the best ways to improve your search engine optimisation (SEO) rankings is to get into the mind of the Googlebot.

The Googlebot is a web crawler that looks through your website and sends the data back to Google to determine your rankings.

Each major search engine has its own web crawler, but for the purpose of this article we will be focusing on the Googlebot (as Google is completely dominant in the search engine industry). The Googlebot looks at the sitemap you have submitted in Search Console and crawls those pages. Internal linking is another way the Googlebot picks up on your pages.

The Googlebot is certainly a lot more advanced than it was a decade ago. Its goal is to look at a page in the same way a user would. This is why it is important to optimise your website for both the Googlebot and your users. It is all about finding the right balance between a well-designed website and a highly optimised one.

Here are the best ways to optimise your website and improve your search presence in Google:

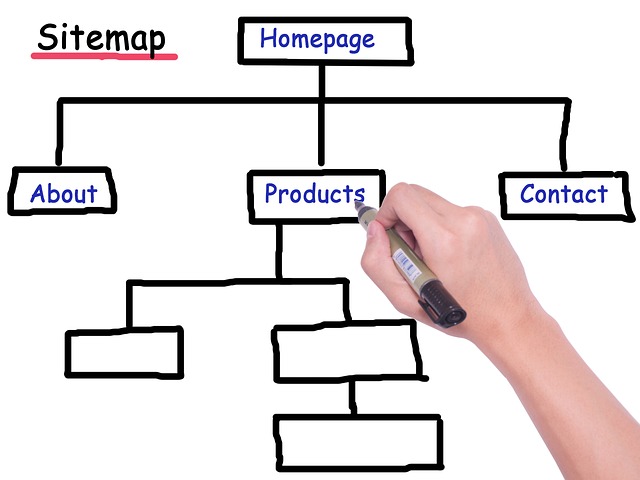

1. Sitemaps

As explained in the introduction, sitemaps are the main way the Googlebot can find all the pages on your website. There are a few things you can do to ensure the Googlebot has the easiest experience when reading your sitemap. Here is an example of a sitemap.

Firstly, you should have only one sitemap index. You can have other categories of sitemaps, but only one index.

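You shouldn’t mark every page in your sitemap as high priority. Priority only means something relative to your other pages, so giving everything the top value tells the Googlebot nothing, and Google has said that it ignores the priority value in any case.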

You should also remove any URLs that return 404 errors or 301 redirects from your sitemap. Check your submitted sitemaps in Search Console regularly to make sure no new issues have arisen.
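
If you want to automate that clean-up, the idea can be sketched in a few lines of Python. This is only a minimal sketch: the example sitemap, the example.com URLs and the `EXAMPLE_STATUSES` lookup are all made up for illustration, and in practice you would replace the lookup with real HTTP requests that do not follow redirects.

```python
import xml.etree.ElementTree as ET

# Namespace used by the standard sitemap protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Hypothetical sitemap content, for illustration only.
EXAMPLE_SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://www.example.com/</loc></url>
  <url><loc>https://www.example.com/old-page</loc></url>
  <url><loc>https://www.example.com/missing</loc></url>
</urlset>"""

# Hypothetical status codes standing in for real HTTP responses.
EXAMPLE_STATUSES = {
    "https://www.example.com/": 200,
    "https://www.example.com/old-page": 301,
    "https://www.example.com/missing": 404,
}


def sitemap_urls(xml_text):
    """Return every <loc> URL listed in a sitemap document."""
    root = ET.fromstring(xml_text)
    return [loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)]


def urls_to_remove(urls, status_of):
    """Return (url, status) pairs for entries that do not answer with 200."""
    flagged = []
    for url in urls:
        status = status_of(url)
        if status != 200:
            flagged.append((url, status))
    return flagged


for url, status in urls_to_remove(sitemap_urls(EXAMPLE_SITEMAP), EXAMPLE_STATUSES.get):
    print(f"{status}: {url}")
```

With the made-up data above, the script flags the redirected page and the missing page, which are the two entries you would remove from the sitemap.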

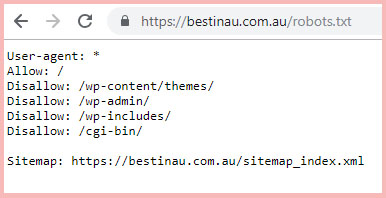

2. Robots.txt

As well as your sitemap, you should also have a robots.txt file. This is one of the first things the Googlebot looks for when it visits your website. You should also include a link to your sitemap in your robots.txt file.

Optimising your robots.txt file can be difficult, so be careful when making changes and do your research first. A common error is to leave a sitewide disallow in your robots.txt, which blocks all search engines from crawling your website. That is obviously something you do not want.
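
To see what a sitewide disallow looks like, and how you might catch one before it goes live, here is a small sketch using the `urllib.robotparser` module from Python's standard library. The two robots.txt files and the example.com URLs are invented for illustration, and Python's parser is only an approximation of how the Googlebot itself reads the file.

```python
from urllib.robotparser import RobotFileParser

# A sitewide disallow: this blocks every crawler from every page.
BLOCK_ALL = """User-agent: *
Disallow: /
"""

# A safer (hypothetical) file that only hides one folder and links the sitemap.
SAFER = """User-agent: *
Disallow: /admin/
Sitemap: https://www.example.com/sitemap.xml
"""


def googlebot_can_fetch(robots_txt, url):
    """Return True if the given robots.txt content lets Googlebot crawl the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch("Googlebot", url)


print(googlebot_can_fetch(BLOCK_ALL, "https://www.example.com/blog/"))   # False
print(googlebot_can_fetch(SAFER, "https://www.example.com/blog/"))       # True
print(googlebot_can_fetch(SAFER, "https://www.example.com/admin/login")) # False
```

Running a check like this against a handful of your most important URLs each time you edit robots.txt is a cheap way to avoid blocking your whole website by accident.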

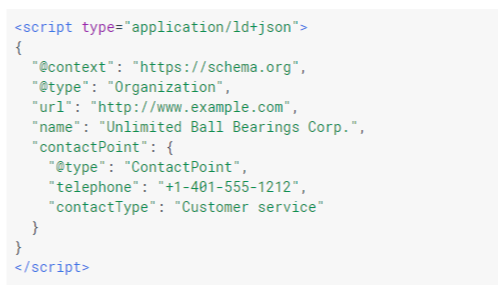

3. Schema

Schema and the use of structured data have certainly risen in importance over the past couple of years. Structured data is great because it helps the Googlebot understand the type of content on your page even better.

When implementing schema on your website, it is important that you follow Google’s structured data guidelines, because Google is known to penalise websites that do not follow the rules. You should also use JSON-LD when implementing schema, because this is Google’s preferred format.
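
As a rough illustration of what JSON-LD looks like, the sketch below builds a script tag for an article page. The `article_json_ld` helper, the author name, the date and the image URL are all hypothetical, and the properties shown are just a small subset of what schema.org's `Article` type offers, so check Google's documentation for the fields your content type needs.

```python
import json


def article_json_ld(headline, author_name, date_published, image_url):
    """Build a JSON-LD <script> tag describing an article (illustrative subset)."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,
        "image": [image_url],
    }
    # Escape "</" so a value can never close the <script> tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Hypothetical values, for illustration only.
print(article_json_ld(
    "Getting into the mind of the Googlebot",
    "Jane Doe",
    "2024-01-15",
    "https://www.example.com/images/googlebot.png",
))
```

The resulting tag goes in the HTML of the page it describes, and you can test the output with Google's Rich Results Test before publishing.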

4. Images

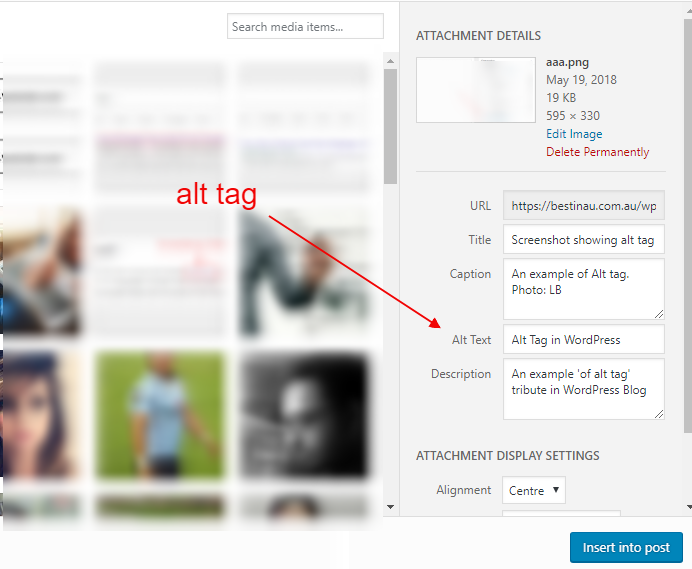

You can no longer deny the importance of images on your website, and there is now extra importance placed on how well you optimise them. A well-optimised image ensures the Googlebot can easily understand its content.

Remember, the crawler doesn’t see the image the same way a human does. So, you should aim to be as descriptive as possible when naming the file and writing the alt text.

You should also add structured data to explain the images and include an image sitemap as well.
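
A quick way to find images that need attention is to scan your pages for `img` tags with no alt text. The sketch below uses Python's built-in `html.parser`. The `MissingAltFinder` class and the sample HTML are made up for illustration, and it treats an empty alt as missing, even though an empty alt can be a deliberate choice for purely decorative images.

```python
from html.parser import HTMLParser


class MissingAltFinder(HTMLParser):
    """Collect the src of every <img> that has no alt text."""

    def __init__(self):
        super().__init__()
        self.missing = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        alt = attributes.get("alt")
        # Treat an absent, valueless or whitespace-only alt as missing.
        if alt is None or not alt.strip():
            self.missing.append(attributes.get("src") or "")


def images_missing_alt(html):
    """Return the src values of images without alt text in an HTML string."""
    finder = MissingAltFinder()
    finder.feed(html)
    return finder.missing


# Hypothetical page fragment, for illustration only.
SAMPLE_HTML = """
<img src="/images/red-running-shoes.jpg" alt="Pair of red running shoes">
<img src="/images/IMG_0042.jpg">
<img src="/images/banner.png" alt="">
"""

print(images_missing_alt(SAMPLE_HTML))
# ['/images/IMG_0042.jpg', '/images/banner.png']
```

Notice that the flagged file `IMG_0042.jpg` also has a name that tells the crawler nothing, so it is a good candidate for both a rename and a descriptive alt.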

5. Site Speed

By now you probably already know just how important site speed is. Purely from a user perspective, visitors are becoming increasingly impatient, and just an extra second in load time can result in a significant percentage of users dropping off.

Speed is especially important on mobile devices, and if the Googlebot detects slow load times, Google may push your rankings down.

It is therefore in your best interest to check Google’s PageSpeed Insights tool. Here Google shows you your speed for both desktop and mobile devices. Google also offers some suggestions as to how you can improve your speed.
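
If you have a lot of pages to check, PageSpeed Insights can also be queried through Google's API rather than the web page. The sketch below only builds the request URL for version 5 of that API. The `psi_request_url` helper and the example.com address are illustrative, and for anything more than occasional use you would normally add an API key, which is left out here.

```python
from urllib.parse import urlencode

# Version 5 endpoint of the PageSpeed Insights API.
PSI_ENDPOINT = "https://www.googleapis.com/pagespeedonline/v5/runPagespeed"


def psi_request_url(page_url, strategy="mobile"):
    """Build a PageSpeed Insights request URL for one page and one device type."""
    if strategy not in ("mobile", "desktop"):
        raise ValueError("strategy must be 'mobile' or 'desktop'")
    return PSI_ENDPOINT + "?" + urlencode({"url": page_url, "strategy": strategy})


# Hypothetical page, checked for both device types.
for device in ("mobile", "desktop"):
    print(psi_request_url("https://www.example.com/", device))
```

Fetching each URL returns a JSON report, so you can track your mobile and desktop results over time instead of checking them by hand.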

6. Meta Data

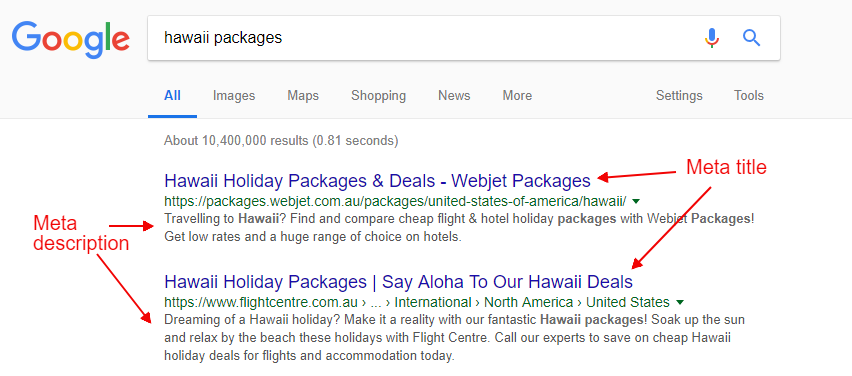

Meta data optimisation has been around for ages, but it is still a proven way to improve your click-through rate as well as your rankings. It is part of the SEO fundamentals, yet it is still sometimes overlooked.

Think about it: when was the last time you optimised your meta data? You should always be looking to change up your meta data and test what works and what doesn’t in terms of your click-through rate.
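
A simple audit script can tell you which pages to look at first. The sketch below pulls the title and meta description out of a page and flags anything missing or overly long. The `MetaExtractor` class, the sample page and the 60 and 160 character limits are assumptions for illustration: those limits are common rules of thumb rather than official Google figures, so adjust them to whatever you see working in your own results.

```python
from html.parser import HTMLParser


class MetaExtractor(HTMLParser):
    """Extract the <title> text and the meta description from a page."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.description = ""
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attributes.get("name") or "").lower() == "description":
            self.description = (attributes.get("content") or "").strip()

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def meta_report(html, max_title=60, max_description=160):
    """Summarise a page's meta data; the length limits are rule-of-thumb assumptions."""
    extractor = MetaExtractor()
    extractor.feed(html)
    title = extractor.title.strip()
    description = extractor.description
    return {
        "title": title,
        "title_missing": not title,
        "title_too_long": len(title) > max_title,
        "description_missing": not description,
        "description_too_long": len(description) > max_description,
    }


# Hypothetical page, for illustration only.
SAMPLE_PAGE = """<html><head>
<title>Red Running Shoes | Example Store</title>
</head><body></body></html>"""

print(meta_report(SAMPLE_PAGE))
```

For the sample page the report shows a usable title but a missing description, which is exactly the kind of gap that is easy to overlook.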